How to Compare AI Search Optimization Tools (Without Wasting Months on the Wrong One)

Over 50 AI search optimization tools have launched in under two years. Pricing ranges from free to $2,000+ per month. Every vendor claims to be the only platform you need. And if you're trying to figure out how to compare AI search optimization tools, you already know the problem isn't a shortage of options.

It's that nobody tells you how to actually evaluate them. That's what this resource is for: not a list of options, but a decision-making rubric.

If you want a list of the available tools, see Graphite's AEO Tool List.

We built this six-step comparison process from our own client work. It covers requirements auditing, evaluation criteria, real pricing analysis, structured trial testing, the switch-or-stay decision, and ROI tracking. By the end, you'll have a shortlist of 2-3 tools matched to your requirements, a testing plan for trial periods, and a framework for measuring ROI after purchase. No star ratings. No "top 10" listicles. Just a repeatable process that works.

Step 1: Audit Your Current Visibility Stack and Define Your Requirements

This step alone will eliminate 80% of the market before you ever open a demo. Most teams skip it entirely and wonder why their shiny new tool doesn't fit their workflow three months later.

Assess Where You Stand Today

Start by answering one question: are you building AI visibility tracking from scratch, or switching from an existing tool? The comparison criteria change depending on your starting point. Teams managing four separate tools to accomplish what one system should handle have different needs than teams with zero AI monitoring. That kind of tool fatigue is one of the most common complaints in user feedback across the category.

Write down what you're tracking now and what's missing. If the answer is "nothing" and "everything," that's fine. You're in the majority.

Separate Must-Haves from Nice-to-Haves

Walk through these five questions before you look at a single product page:

If you can answer those five questions, you've already eliminated half the market. Write your answers down. You'll use them as a filter in every step that follows.

The SEO vs. AEO Decision

Your requirements narrow fast here. Are you optimizing for traditional search, AI visibility, or both? That answer determines whether you need a purpose-built AEO tool (Profound, Peec AI, Otterly) or an SEO suite with AI visibility add-ons (Semrush, Ahrefs Brand Radar, SE Ranking).

Don't assume your existing SEO tool covers it. Kevin Indig's research found that none of the classic SEO metrics have strong relationships with AI citation rates. Tools like Surfer SEO and Clearscope are strong for traditional rankings but don't track whether optimized content gets cited in AI-generated answers. Tools built on keyword-ranking foundations may not capture what actually drives AI visibility.

Here's a quick decision guide for how to compare AI search optimization tools at this stage:

Step 2: Build Your Evaluation Criteria Around Data Accuracy

Two tools can track the exact same AI platform and return completely different results. That's not a bug. It's a consequence of how they collect data, and it's the most overlooked factor when you compare AI search optimization tools.

Why Data Collection Methodology Is the Real Differentiator

Tools using front-end UI scraping (simulating real user queries in the browser) produce fundamentally different results than tools relying on provider APIs. API outputs can differ from what actual users see. Rankability tested 22 AI search visibility tools and flagged data collection methodology as the single most important comparison criterion.

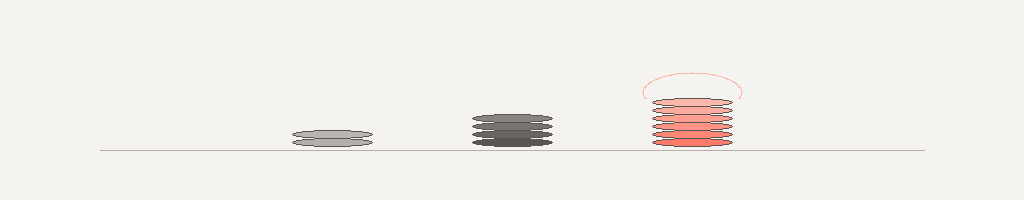

Three methods exist, and you should know which one any tool on your shortlist uses:

Ask every vendor which method they use. If they can't answer clearly, that's a red flag.

Platform Coverage Matters More Than You Think

ChatGPT answers overlap with Google search results just 12% of the time. A tool that only monitors one AI platform misses the vast majority of your visibility landscape. And that landscape is volatile: 40-60% of cited domains change month to month across major AI platforms, according to Profound's citation drift research.
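To get a feel for that volatility on your own data, you can compare the domains cited for your queries in two consecutive months. The sketch below uses one possible definition of drift (the share of last month's cited domains that no longer appear this month). That definition is an assumption for illustration, not necessarily the one Profound uses, and the domain names are made up.

```python
def citation_drift(previous, current):
    """Share of last month's cited domains that are missing this month.

    Assumed definition for illustration only; vendors may measure drift differently.
    """
    previous, current = set(previous), set(current)
    if not previous:
        raise ValueError("previous month has no cited domains")
    dropped = previous - current
    return len(dropped) / len(previous)


# Hypothetical citation exports from a tool, one set of domains per month.
last_month = {"a.example", "b.example", "c.example", "d.example", "e.example"}
this_month = {"a.example", "b.example", "c.example", "x.example", "y.example"}

drift = citation_drift(last_month, this_month)
print(f"Citation drift: {drift:.0%}")
```

If a tool on your shortlist can't export cited domains per month, you can't run this check at all, which is worth knowing before you buy.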

When comparing AI SEO tools, evaluate three coverage dimensions:

The Monitoring vs. Optimization Divide

The most consistent complaint across GEO tools and AEO platforms is that dashboards show what's happening but not what to do about it. Many tools report problems without providing any path to fix them.

Split every tool into one of two buckets:

Platforms that bridge monitoring to content optimization score highest in real-world evaluations. If actionability is a must-have from Step 1, this criterion alone will cut your list significantly.

Build a Weighted Scorecard

Create a simple scoring template with weights adjusted to your Step 1 requirements:

Shift the weights based on what matters most to your team. If you're a Shopify brand that needs platform breadth, bump coverage to 30% and reduce integrations. The point is structured evaluation, not gut feel.
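If you'd rather keep the scorecard in code than in a spreadsheet, here is a minimal sketch. The criteria names, the weights, and the 1-5 scores are all placeholders we made up for illustration; swap in the criteria and weights from your own Step 1 requirements.

```python
# Hypothetical criteria and weights. Adjust to your own requirements.
WEIGHTS = {
    "data_accuracy": 0.30,
    "platform_coverage": 0.20,
    "actionability": 0.20,
    "pricing": 0.15,
    "integrations": 0.15,
}


def weighted_score(scores, weights=WEIGHTS):
    """Return the weighted average of per-criterion scores (e.g. on a 1-5 scale)."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(weights[name] * scores[name] for name in weights)


# Made-up trial scores for two tools on a shortlist.
tool_a = {"data_accuracy": 4, "platform_coverage": 3, "actionability": 5,
          "pricing": 2, "integrations": 4}
tool_b = {"data_accuracy": 5, "platform_coverage": 4, "actionability": 2,
          "pricing": 4, "integrations": 3}

for name, scores in [("Tool A", tool_a), ("Tool B", tool_b)]:
    print(f"{name}: {weighted_score(scores):.2f}")
```

The weight check is deliberate: if you bump coverage to 30%, the function forces you to take those points from somewhere else rather than quietly inflating every tool's total.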

Feature lists sell tools. Data accuracy determines whether you'll still be using it in six months.

Step 3: Create a Shortlist Using Real Pricing Data (Not Marketing Pages)

Published pricing in the AEO space is misleading at best. Setup fees, per-domain charges, platform add-ons, and scaling costs can push the real price to 2-3x the number on the marketing page. AEO platforms typically cost 20-50% more than traditional SEO tools, and the hidden costs add up fast.

The Hidden Cost Problem

Beyond the monthly subscription, watch for these line items that don't show up until you're deep in a sales conversation:

One user reported that almost every useful feature triggered a popup asking for another $289/month. That's not a pricing model. That's a trap. Enterprise users flag a similar pattern with Semrush's AI Copilot: broad data but surface-level AEO recommendations, pushing teams to buy additional tools for AI visibility execution.

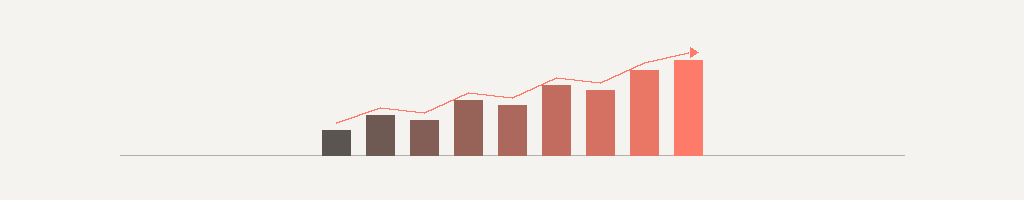

Real Pricing by Budget Tier

Here's what actual tool costs look like across three budget levels when you compare AI search optimization tools head-to-head:

Note the Semrush path: its AI Visibility Toolkit runs $99/month per domain on top of the $140+ base subscription. Two domains and you're at $338/month before you've tracked a single AI citation. Ahrefs Brand Radar, by contrast, is included with any plan starting at $129/month with no add-on fee.
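The Semrush arithmetic is simple enough to script, which helps when you want to see how the bill scales with domain count. This sketch uses the figures quoted above ($140 base, $99 per domain) as defaults; since the base is "$140+", treat the result as a floor rather than a quote.

```python
def semrush_ai_visibility_cost(domains, base_subscription=140.0, addon_per_domain=99.0):
    """Monthly floor: base subscription plus the per-domain AI Visibility add-on."""
    if domains < 0:
        raise ValueError("domains must be non-negative")
    return base_subscription + addon_per_domain * domains


for n in (1, 2, 5):
    print(f"{n} domain(s): ${semrush_ai_visibility_cost(n):,.0f}/month")
```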

Calculate Cost Per Query Tracked

Reframe the comparison from monthly subscription to value per insight. Ask each vendor:

A Shopify brand tracking three competitors across four AI platforms may hit the ceiling of a $79/month plan within weeks. This calculation reveals which tools deliver density of insight versus which ones spread thin at their price point.
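One way to put a number on this is to divide the monthly price by the number of prompt and platform combinations the plan actually tracks. The sketch below assumes that simple definition; real plans count usage differently from vendor to vendor, and the 25-prompt figure is hypothetical.

```python
def cost_per_tracked_query(monthly_price, prompts, platforms, runs_per_month=1):
    """Monthly price divided by tracked checks (prompts x platforms x runs).

    Assumed definition for illustration; check how each vendor counts usage.
    """
    tracked = prompts * platforms * runs_per_month
    if tracked <= 0:
        raise ValueError("prompts, platforms, and runs_per_month must be positive")
    return monthly_price / tracked


# Hypothetical: a $79/month plan tracking 25 prompts on four AI platforms.
per_query = cost_per_tracked_query(79, prompts=25, platforms=4)
print(f"${per_query:.2f} per tracked prompt per platform")
```

Run the same calculation for each tool on your list with the limits the vendor confirms in writing, not the ones implied on the pricing page.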

Build Your Shortlist

Apply your Step 1 requirements, Step 2 weighted scorecard, and the real pricing above. Cut the list to 2-3 tools worth trialing. If nothing fits your budget and must-haves simultaneously, that's useful information too. The market is evolving quarterly, and a tool that fits may launch before your next budget cycle.

Step 4: Run a Structured Trial (Here's Exactly What to Test in 14 Days)

A 14-day free trial is only useful if you walk in with a plan. Most teams sign up, click around the dashboard for a few days, get pulled into other work, and make a decision based on first impressions. That's not evaluation. That's guessing.

Request 14-30 day trials from each tool on your shortlist. Then run these five tests on every single one.

Score as You Go

Pull out your weighted scorecard from Step 2 and score each tool during the trial, not after. Memory fades and first impressions are unreliable. Documented scores from structured tests are what you'll present to your team or leadership when it's decision time.

Run your trials in parallel, not sequentially. Testing two tools side-by-side on the same queries reveals differences that sequential testing hides.
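A parallel trial gets more useful when you also spot-check each tool against what you see in the AI platform yourself. Here is a minimal sketch of that comparison, assuming you log for each query whether your brand was mentioned (True or False), once from your own manual check and once from each tool. The query IDs and results are invented for illustration.

```python
def agreement_rate(tool_results, manual_checks):
    """Share of manually checked queries where the tool matches what you saw."""
    shared = tool_results.keys() & manual_checks.keys()
    if not shared:
        raise ValueError("no queries in common between tool and manual checks")
    matches = sum(tool_results[q] == manual_checks[q] for q in shared)
    return matches / len(shared)


# Hypothetical data: was the brand mentioned in the answer to each query?
manual = {"q1": True, "q2": True, "q3": False, "q4": True}
tool_a = {"q1": True, "q2": False, "q3": False, "q4": True}
tool_b = {"q1": True, "q2": True, "q3": False, "q4": True}

for name, results in [("Tool A", tool_a), ("Tool B", tool_b)]:
    print(f"{name}: {agreement_rate(results, manual):.0%} agreement with manual checks")
```

AI answers vary from run to run, so a single mismatch doesn't prove a tool wrong. A consistently low agreement rate across many queries is the signal to question the data.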

Step 5: Make the Decision (and Know When Your Current Tool Is Good Enough)

What if the best decision is to not buy anything new? Every comparison guide assumes you'll switch tools. Sometimes the data says otherwise. Knowing when to compare AI search optimization tools and when to stop comparing is its own skill.

The Decision Matrix

Take your trial scores from Step 4 and map them against the requirements you defined in Step 1. The decision framework is straightforward:

When to Stay With What You Have

Over 50 AEO tools have launched in under two years. Many are less than a year old. Early-stage tools generate genuine excitement but often come with limited integrations and smaller data sets than advertised.

If your current setup covers 80% of your needs, calculate the switching cost honestly. Time spent onboarding a new platform, the learning curve for your team, data migration from your existing setup, and the inevitable productivity dip during transition all carry real costs. A 20% improvement in features might not justify three months of disruption.
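To make "calculate the switching cost honestly" concrete, you can estimate the one-time cost and how many months the new tool needs to pay it back. Every input below (hours, hourly rate, dip cost, monthly benefit) is a hypothetical placeholder; plug in your own estimates.

```python
def switching_cost(onboarding_hours, migration_hours, hourly_rate, productivity_dip_cost=0.0):
    """One-time cost of switching: team time plus an estimate for the transition dip."""
    return (onboarding_hours + migration_hours) * hourly_rate + productivity_dip_cost


def payback_months(one_time_cost, monthly_benefit, monthly_price_increase=0.0):
    """Months until the switch pays for itself, or None if it never does."""
    net_monthly_gain = monthly_benefit - monthly_price_increase
    if net_monthly_gain <= 0:
        return None
    return one_time_cost / net_monthly_gain


# Hypothetical estimates for one team.
cost = switching_cost(onboarding_hours=40, migration_hours=20,
                      hourly_rate=75, productivity_dip_cost=1500)
months = payback_months(cost, monthly_benefit=800, monthly_price_increase=300)
print(f"Switching cost: ${cost:,.0f}, payback in {months:.0f} months")
```

If the payback period runs longer than your next planned re-evaluation, staying put is probably the better call.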

The Future-Proofing Question

Before you commit, ask three questions about longevity:

The best tool is the one your team will actually use daily. A perfect platform that collects dust loses to a good-enough tool embedded in your workflow.

Step 6: Set Up ROI Tracking from Day One

AI search visitors convert at 4.4x the rate of traditional organic visitors. Content optimized for AI visibility achieves 6-7% conversion rates versus 3-4% for traditional SEO. And when Google's AI Overview appears, only 8% of users click on traditional results, compared to 15% without an AI summary. The ROI of AI visibility is there. Most teams just never set up the measurement to prove it.

The Four Metrics That Matter

Skip vanity dashboards. Track these four numbers monthly:

Build a 90-Day Baseline

Structure your first three months with the new tool around measurable milestones:

FAQ

What's the difference between AEO tools, GEO tools, and AI SEO tools?

These terms overlap significantly. AEO (Answer Engine Optimization) and GEO (Generative Engine Optimization) both focus on visibility in AI-generated answers. "AI SEO tools" is the broader category, which also includes traditional SEO platforms that have added AI tracking features. Industry voices like Gianluca Fiorelli argue the distinction is branding, not substance. Focus on what a tool actually does, not which label it uses to describe itself.

Can I use free tools to compare AI search optimization platforms?

Yes. Start with HubSpot's AEO Grader (free, no signup) and Mangools AI Search Grader for a baseline snapshot. These won't give you ongoing monitoring, but they'll show where you stand right now. Free tiers from Otterly and SE Ranking provide limited ongoing tracking. Use free tools for your Step 1 audit before investing time in paid trials.

How often should I re-evaluate my AI search optimization tool?

Every six months minimum. The AEO space has 50+ tools, and many are less than a year old. New entrants, major feature updates, and pricing changes happen quarterly. Set a calendar reminder to re-run Steps 2 through 4 with your current tool scored against one new competitor each cycle. This keeps your evaluation current without turning it into a full-time project.

Do I need a separate AEO tool if I already use Semrush or Ahrefs?

Not necessarily. Semrush's AI Visibility Toolkit ($99/month add-on per domain) and Ahrefs Brand Radar (included at $129/month) both add AI tracking to existing SEO workflows. The trade-off is convenience versus depth. Purpose-built AEO tools offer deeper AI-specific insights but add another platform to manage. If tool fatigue is already a problem on your team, an add-on that keeps everything in one dashboard may be the smarter path.

What's the biggest mistake teams make when selecting a tool?

Comparing features instead of data accuracy. Two tools can track the same AI platform and return different results depending on their data collection method (UI scraping vs. API). One user found a tool reporting that their brand wasn't present on AI platforms, even though they had manually confirmed mentions in ChatGPT. Always verify a tool's data against what you see in the actual AI platform before trusting it for strategic decisions. See Step 2 above for the full breakdown of data collection methods and why they matter.
